- A/B Testing Feature Walkthrough

4 weeks ago - edited 4 weeks ago

Article Contents:

- Before You Begin

- Entry Points and References

- Build an Experiment

- Experiment Begins

- Experiment In Progress

- Experiment Results Ready for Review

- Experiment Conclusion: Mark as Complete and Other Available Actions

- Export Experiment Data

Before You Begin

Important information about the Enhanced Content A/B Testing feature:

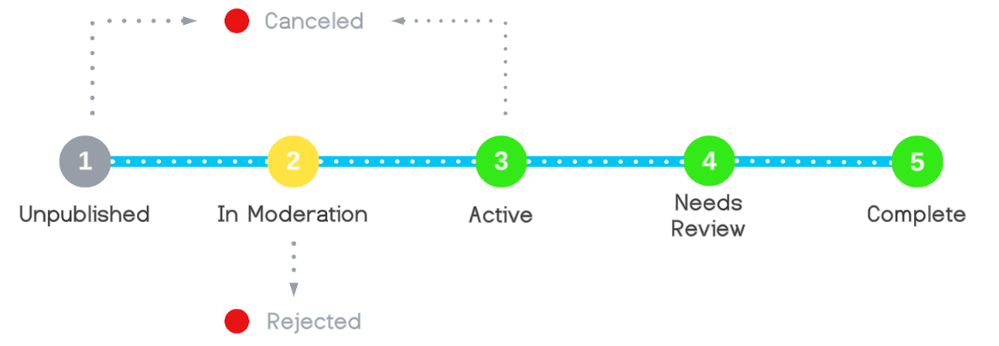

- The lifecycle of A/B tests in Syndigo is a net-new concept and unique to experiments. Every A/B test reflects a distinct status based on its progress through the experiment lifecycle. A/B test statuses may look familiar, but they are not identical to the Enhanced Content publication statuses.

- An A/B test is always in either an operational status or a terminated status.

- Operational statuses are assigned when the experiment is unpublished, in moderation, active, or needs review. These statuses indicate that the A/B test is moving through the stages of the experiment lifecycle and has not yet encountered a termination event.

- Terminated statuses – canceled, rejected, and complete – are assigned when the experiment is rendered invalid or formally concluded. Once assigned to an A/B test, there is no option to change the experiment back to an operational status.
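The split between operational and terminated statuses can be sketched as a small enumeration. This is only an illustration of the lifecycle described above, not Syndigo's implementation: the names `ABTestStatus`, `TERMINATED`, and `is_operational` are hypothetical, and the sketch does not model the order in which operational statuses follow one another.

```python
from enum import Enum


class ABTestStatus(Enum):
    # Operational statuses: the test is still moving through the lifecycle.
    UNPUBLISHED = "Unpublished"
    IN_MODERATION = "In Moderation"
    ACTIVE = "Active"
    NEEDS_REVIEW = "Needs Review"
    # Terminated statuses: the test is invalid or formally concluded.
    CANCELED = "Canceled"
    REJECTED = "Rejected"
    COMPLETE = "Complete"


# Once a test holds one of these, it cannot return to an operational status.
TERMINATED = frozenset(
    {ABTestStatus.CANCELED, ABTestStatus.REJECTED, ABTestStatus.COMPLETE}
)


def is_operational(status: ABTestStatus) -> bool:
    """Return True while the test has not hit a termination event."""
    return status not in TERMINATED
```

For example, `is_operational(ABTestStatus.NEEDS_REVIEW)` is true, while `is_operational(ABTestStatus.CANCELED)` is false and stays false for the life of the test.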

Syndigo Enhanced Content A/B Testing: Experiment Lifecycle and Statuses:

- Each A/B test may be created for only one Enhanced Content. (1:1 ratio of A/B test to Enhanced Content.) At this time, there is no ability to create Enhanced Content or A/B tests in bulk.

- If the Enhanced Content or A/B testing feature sets are disabled on an account with operational A/B tests, all tests will be canceled automatically by the system. Also, applying an override to an individual feature (test type) within the A/B testing feature set will cancel all operational status experiments of that test type.

- Experiments may be canceled by a technical team member of Syndigo for any reason. In such cases, communication will either precede or follow the event with information about the reason for the cancellation.

- Special considerations for the handling of URLs:

- When associated with A/B tests in most operational statuses, the Enhanced Content feature may not be disabled on URLs from the interface.

- However, URLs associated with experiments may continue to be deleted by Syndigo users.

- There is also no limit to the addition of new URLs to a product undergoing A/B testing – if the added URL is valid, without errors, associated with one of the sites specified in the test configuration, and is EC feature-enabled, then the experiment will be available on the new URL.
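The conditions under which a newly added URL picks up a running experiment can be read as a single boolean check. The sketch below assumes a hypothetical `ProductUrl` record with illustrative field names; none of these names come from the Syndigo platform.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class ProductUrl:
    site: str          # retailer website the URL belongs to (hypothetical field)
    is_valid: bool     # the URL itself is valid
    has_errors: bool   # the URL currently reports errors
    ec_enabled: bool   # the Enhanced Content feature is enabled on the URL


def experiment_available_on(url: ProductUrl, test_sites: Set[str]) -> bool:
    """All four conditions from the text must hold for the URL to join the test."""
    return (
        url.is_valid
        and not url.has_errors
        and url.ec_enabled
        and url.site in test_sites
    )
```

A URL that fails any one condition, for example one on a site that was not selected in the test configuration, simply does not carry the experiment.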

Please reference the checklist below to prepare for A/B testing:

- Read the article, Introduction to Enhanced Content A/B Testing.

- Make note of the desired test settings as soon as possible when developing a plan for A/B testing, such as how long the experiment should run, which retailer websites to target, and what actions should be automatically applied when the experiment ends.

- Ready the Enhanced Content to become Content A. This can be existing content that was published earlier and is already in an Approved status or it can be a new Enhanced Content that will be published for the first time as part of the experiment.

- Prepare the assets for the widget (the variable) that will be tested. The assets may be uploaded in advance or during the A/B test creation workflow.

- Please note: The Enhanced Content that becomes Content A may not be modified once the A/B test is in an operational status. To unlock the content for changes, the operational A/B test must be canceled.

- Recipient-specific Enhanced Content continues to override generic collections on specific sites. Analyze all the Enhanced Content associated with a Syndigo product as you prepare to build an A/B test, to ensure any recipient-specific content will not interfere with your experiment plans.

- Linked products continue to function as with non-experiment Enhanced Content. The child products inherit the Enhanced Content from the parent product, and Enhanced Content may only be created and managed from the parent product record. A/B tests configured on the Enhanced Content of the parent product will be inherited by child products.

- Perform a general health check of Enhanced Content for your account:

- Check that the addition of A/B testing will not cause the account to exceed the product, Enhanced Content, or URL limitations of the account.

- All desired Enhanced Content Recipients should be linked within the Syndigo Recipients interface.

- Ideally, the URLs targeted by the experiment will be added on the Syndigo Product, EC feature-enabled, and without errors prior to the A/B test publication. However, if relevant URLs are added or resolved at a later time while the experiment is active, the experiment will be made available on those URLs automatically.

Entry Points and References in Syndigo

Users may gain access to the A/B Testing interface via the Product Details Page, but references to A/B Testing may be found in the other subtabs within the Enhanced Content section of the Product Details Page, the Publication Workflow of the Product Details Page, URLs tab of the Product Details Page, the Enhanced Content Index, the URLs Index, and the Enhanced Content Dashboard.

Additional references to A/B testing may be found in various areas within the Product Details Page, such as the Enhanced Content Hero Image and In-Line layout interfaces, the Publication Workflow and the URLs section. Please see details about each interface below.

Note: In later beta releases, references to A/B Testing will be present in the other areas in Syndigo such as the Enhanced Content Dashboard, the Enhanced Content Index, and the URLs Index.

- Product Details Page > Enhanced Content > A/B Testing Landing Page - The A/B Testing tab is present within the Enhanced Content section of the Product Details Page when the A/B testing feature is granted on the company account.

- When at least one A/B test has been created for the Collection being viewed, the A/B Testing Landing Page lists out the experiment(s) in tile form. Each tile contains test configuration information as well as test status.

- A status filter control is available in the upper right corner of the list which allows users to filter the tiles displayed by the A/B test status.

- Clicking on a test name within a tile will navigate to:

- The A/B test creation workflow if the experiment status is Unpublished (Draft), or

- The A/B Test Details Page if the experiment is in any other status.

- Product Details Page > Enhanced Content > Hero Image or In-Line Layouts - The Hero Image and In-Line interfaces will remain mostly unchanged after the release of the A/B testing feature, with one exception: Once an A/B test has been created, even in draft form, the collection that is serving as the Content A of the experiment cannot be modified. The layout views of such Enhanced Content are switched to a read-only state, and messaging is displayed to explain the restrictions. After the A/B test is complete, the Enhanced Content is released from these restrictions and may be fully managed once again.

- Product Details Page > Enhanced Content Publication Workflow - Enhanced Content associated with an operational A/B test may not be published from the standard Publication Workflow available on the Product Details Page (clicking the Publish button). If the Enhanced Content with an operational A/B test is selected in the Publication modal, the workflow will not allow users to proceed with publication and messaging is displayed to inform of the restrictions.

- Product Details Page > URLs - For any URLs where an A/B test is published and assigned a status of active, in moderation, or unconfirmed, the EC feature may not be disabled. All URLs may be deleted from this interface, however, regardless of association with A/B testing.

- Enhanced Content Index – A column dedicated to A/B testing, along with an associated filter option, has been added to this interface. The A/B Testing column appears adjacent to the Collection Status column, and reflects the status associated with the most recent A/B Testing activity for each Enhanced Content record. The option to filter for values in this column appears in the Collection Content tab of the filter pane. Enhanced Content associated with any operational A/B test may not be published from the standard Publication Workflow on the Enhanced Content Index. If multiple Enhanced Content products are selected for bulk publication, the system will skip over all content associated with operational A/B tests.

- URLs Index – EC feature disablement, whether in bulk or on an individual URL, is not permitted on the URLs Index interface. However, there are no restrictions on the deletion of URLs from the Index.

- Enhanced Content Dashboard – The tile located to the right of Site Information now serves as a home for A/B Testing information. Announcements have been moved to the bottom of the Dashboard.

- When at least one experiment has been created on the account, the A/B Testing tile serves as an index of non-terminated status A/B tests. Each non-terminated test is listed in a table, and clicking on the name of any record in this table will navigate to the test details page for the given experiment.

- When there are no tests created yet or the A/B testing feature has been deactivated on the account, this tile contains some general information and a hyperlink to the A/B Testing help articles.

Build an Experiment

There are two A/B test templates available:

- Add a Widget - Using the “Add a Widget” template ensures that content versions A and B are identical in all aspects with a single exception – content B includes one additional widget that is not present in content A. Because there is no more than one difference between the content versions tested, any difference in shopper behavior can be attributed to the presence of the single added widget.

- Custom Uncontrolled Experiment - Using the “Custom Uncontrolled Experiment” template allows for the design of Content B to differ from Content A in any number of ways with no limit enforced by the interface. This is the only method to build test cases such as:

- Old brand creative versus new brand creative to quantify the ROI on brand refreshes

- Reordering widgets to compare performance when engaging content is higher up in the viewport

- Optimizing hotspot placement and assets

The same high-level KPIs are calculated and presented regardless of whether an A/B test is a controlled, “Add a Widget” test type or an uncontrolled experiment. However, as deeper level metrics are unveiled over time, only controlled A/B tests will qualify for the more advanced insights that attribute shopper behavior to the presence of specific content or creative assets. The Syndigo attribution model will not apply to uncontrolled experiments due to the potential to introduce multiple variables between the two content versions tested.

Note: Before proceeding further in these instructions, please complete the checklist found in the section "Before You Begin."

The process to build any enhanced content A/B test is as follows:

- Navigate to the Enhanced Content that will become Content A.

- Click the Create New Test button on the A/B Testing Landing Page.

- Input the test configuration details.

- Design the Content B (where saving a draft of the A/B test also occurs).

- Review and Publish the A/B test.

The detailed steps within this process may vary slightly depending on the chosen template, but the primary workflow is as follows:

Step 1: Navigate to the Enhanced Content that will become Content A.

Content A must be a new or existing Enhanced Content in the Syndigo product record. All of the following conditions must be met for an Enhanced Content to be eligible to serve as the Content A of an A/B test:

- The publication status is not In Review for any sites in the collection, including the websites awaiting recipient moderation.

- All required information and valid assets were provided in the widgets and there are no errors present.

- The content is not currently associated with any operational A/B test.

If any of the above conditions are not met, the Enhanced Content does not qualify to become a Content A. The Create New Test button is presented in a non-clickable state on the A/B Testing Landing Page, preventing users from initiating the workflow.
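The three eligibility conditions can be expressed as a check that returns the reasons a collection does not qualify. This is a minimal sketch under stated assumptions: the `EnhancedContent` record, its fields, and the status string "In Review" as stored per site are illustrative, not the platform's data model.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class EnhancedContent:
    site_statuses: Dict[str, str]   # site -> publication status (hypothetical)
    has_widget_errors: bool         # missing required info or invalid assets
    has_operational_test: bool      # already tied to an operational A/B test


def ineligibility_reasons(ec: EnhancedContent) -> List[str]:
    """Return an empty list when the content may serve as Content A."""
    reasons = []
    if any(status == "In Review" for status in ec.site_statuses.values()):
        reasons.append("publication status is In Review for at least one site")
    if ec.has_widget_errors:
        reasons.append("widgets have missing information or errors")
    if ec.has_operational_test:
        reasons.append("content is already associated with an operational A/B test")
    return reasons
```

An empty result corresponds to a clickable Create New Test button; any non-empty result corresponds to the button being shown in its non-clickable state.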

Step 2: Click the Create New Test button on the A/B Testing Landing page.

- A user may only create a new A/B test for an Enhanced Content that is either associated with no A/B tests or for which all previously-designed A/B tests have been terminated. If the system detects an existing, operational A/B test, the Create New Test button is presented in a non-clickable state on the A/B Testing Landing page. This measure was put in place to prevent multiple A/B Tests from running simultaneously on the same sites, which may skew and impact the experiment results negatively.

- The Create New Test button is located on the A/B Testing Landing page. Confirm that the Enhanced Content name reflected in the collection selector control located in the upper left corner of the page is the correct one for which the A/B test is to be created. For products with multiple Enhanced Content collections, click on the collection selector to reveal a dropdown that allows you to choose any Enhanced Content in the product. Only one collection may be selected in this tool at a time, as the A/B Testing Landing page presents information for only the single, selected collection.

- If the selected Enhanced Content qualifies for the creation of a new experiment, users may click Create New Test.

Step 3: Input test configuration details.

Upon clicking the Create New Test button, the Test Configuration Modal is displayed. The following settings are available in this modal. Required settings are indicated with an asterisk:

- Selection of test type*

- Only one option may be selected from the available options. As test types are added to the A/B testing feature, additional options will be presented.

- Choosing the “Custom uncontrolled experiment” option reveals additional messaging that must be acknowledged before continuing. Click the checkbox to acknowledge the statement.

- Test name*

- Ideally, this name should be unique and help users within the organization easily identify the key traits of the experiment, such as the product or product set, brand, and widget information.

- There is a limit of 100 characters, and both standard text and special characters are accepted.

- Selection of sites where the experiment will be published*

- The retailer websites available in this dropdown menu are:

- Associated with the account’s linked Enhanced Content Recipients,

- Included in the list of sites owned by the Recipients targeted by the Enhanced Content (based on locale and language), and

- Configured by a Syndigo admin user to accept experiments.

- Only the Content A will be published to any sites that are left unselected in this configuration. The experiment will only be active on the sites selected here.

- Test duration*

- This setting determines when the experiment will stop running and present the results for review.

- There may be default options available to have the experiment run for a set number of weeks. There is also a custom duration option that allows the user to specify the end date. The experiment may be configured to stop running and present results when:

- The end date has passed, or

- The algorithm has declared the experiment results to be statistically significant.

- Users to receive email communications about this A/B test

- This is the only optional field in the configuration modal. The user may select other users within their organization’s account or enter email addresses manually to receive email communications about the start and end of the experiment. The user creating the test will always receive the email notifications automatically, without having to add their email address here.

- What the system should do with the Enhanced Content when the experiment stops running and results are ready for review*

- The “manual” option results in Content A being published automatically to all sites targeted by the Enhanced Content locale and language when the experiment stops running. When reviewing the results, a user may then choose whether to keep Content A or switch to Content B and mark the experiment as complete.

- With the option to automatically apply the winning content, the system may publish Content A or Content B to all sites when the experiment stops running, depending on whether the algorithm declares statistical significance and determines a winning content version. A user may still switch contents manually when reviewing the results.
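The configuration rules above (required fields, the 100-character name limit, the acknowledgement for uncontrolled experiments) can be summarized as a validation routine. The sketch below is hypothetical throughout: `ABTestConfig`, its field names, and the string values for test type and end action are illustrative, and the duration is simplified to a single end date even though the modal may also offer preset week counts and a stop-on-significance option.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional

MAX_NAME_LENGTH = 100  # limit stated in the article


@dataclass
class ABTestConfig:
    test_type: str                 # "add_a_widget" or "custom_uncontrolled" (assumed values)
    name: str
    sites: List[str]
    end_date: Optional[date]       # simplification of the duration setting
    end_action: str                # "manual" or "auto_apply_winner" (assumed values)
    notify_emails: List[str] = field(default_factory=list)  # the only optional setting
    acknowledged_uncontrolled: bool = False


def validate(config: ABTestConfig, today: date) -> List[str]:
    """Collect every problem that would block saving the configuration."""
    errors = []
    if config.test_type not in ("add_a_widget", "custom_uncontrolled"):
        errors.append("a test type must be selected")
    if config.test_type == "custom_uncontrolled" and not config.acknowledged_uncontrolled:
        errors.append("the uncontrolled-experiment statement must be acknowledged")
    if not config.name.strip():
        errors.append("a test name is required")
    elif len(config.name) > MAX_NAME_LENGTH:
        errors.append("the test name exceeds 100 characters")
    if not config.sites:
        errors.append("at least one site must be selected")
    if config.end_date is None or config.end_date < today:
        errors.append("a valid end date is required")
    if config.end_action not in ("manual", "auto_apply_winner"):
        errors.append("an end-of-test action must be selected")
    return errors
```

Returning a list of messages rather than raising on the first failure mirrors how a modal would typically flag every invalid field at once.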

Step 4: Design the Content B and save the A/B Test.

Designing the Content B for an Add a Widget experiment:

When building an Add a Widget A/B test, this stage requires the creation of a new widget that will be present only in Content B:

- Note the widgets that are already populated in the interface. They are identical to the widgets found in the collection serving as Content A in this test, and are locked in a read-only state so that they cannot be modified in any way.

- Click the Add New Widget button to select the desired widget type to be added to Content B. Please select the correct widget type in this step, as the widget type cannot be modified at a later stage.

- The standard widget details modal, the same tool that is utilized when managing Enhanced Content outside of A/B testing, will appear. The widget details may be inputted now, or the unconfigured widget may be added immediately to be completed at a later time. Click the Add Widget button in the lower right corner of the modal to proceed with either option. Note: Please ensure the correct widget type is selected in this step prior to clicking the Add Widget button in the modal. If the incorrect widget type was selected, close this modal to return to the interface where the Add New Widget button can be clicked again and the correct widget type may be selected.

- The new, added widget will be reflected in the layout(s) at the bottom of the other widgets. The actions that may be applied to this widget are as follows:

- The added widget may be moved anywhere within the list of existing widgets. The existing widgets that are identical to those in Content A may not be modified in any way.

- The widget details can be modified further. Click the Actions button located within the added widget to reveal the Edit option in the menu. Choosing Edit will display the standard widget details modal, where changes may be applied.

- The added widget may be deactivated in one of the two layouts (Hero Image or In-line) if desired. Take care not to deactivate the added widget in both layouts, as this will render the A/B Test invalid.

Please Note: Users may only add a widget in the layout(s) of Content B where there is at least one widget in Content A. For example, if the Enhanced Content A has content in both the Hero Image and In-Line layouts, then the new widget may be included on either both layouts or only one of the two layouts in Content B. If Content A only includes widgets in one layout and not the other, the Include On layout controls in the tool will be locked down to prevent the accidental addition of the new widget to the irrelevant layout.
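The layout rule for the added widget can be sketched as a set check: the widget may be placed only in layouts where Content A already has at least one widget, and it must remain active in at least one layout. The function names and the layout keys `"hero_image"` and `"in_line"` are illustrative assumptions.

```python
from typing import Dict, Set


def allowed_layouts(content_a_widget_counts: Dict[str, int]) -> Set[str]:
    """Layouts of Content A that contain at least one widget."""
    return {layout for layout, count in content_a_widget_counts.items() if count > 0}


def is_valid_placement(selected: Set[str], content_a_widget_counts: Dict[str, int]) -> bool:
    """The added widget needs at least one layout, all of them allowed."""
    return bool(selected) and selected <= allowed_layouts(content_a_widget_counts)
```

With Content A populated only in the Hero Image layout, `is_valid_placement({"hero_image"}, {"hero_image": 3, "in_line": 0})` holds, while selecting `"in_line"` or selecting no layout at all does not.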

Designing the Content B for a Custom Uncontrolled Experiment:

Unlike a controlled template such as Add a Widget, the user interface does not provide guidance on the order of operations for designing the Content B of an uncontrolled experiment. The platform navigates directly to the Content B layout tools, where widgets may be added, deleted, and modified freely using the same controls used to build standard Enhanced Content today.

Manage the layout of Content B and save the A/B Test

The following actions are available on this page:

- Save the A/B test as a draft

- Modify the design of Content B

- Cancel the experiment (terminated)

- Modify the test configurations further

- Navigate to the final step of the workflow – Review

Saving the A/B test - This moment in the workflow serves as the first opportunity to save all progress. Exiting from the A/B test creation workflow prior to saving will result in the loss of all configurations.

The Save Changes button must be clicked before the user is permitted to navigate forward in the workflow. If the user attempts to move forward to Review without saving changes, additional guidance is provided.

Once the draft is saved, the A/B test is assigned the status Unpublished, which is an operational status. As a reminder, when an A/B test is assigned an operational status, restrictions are applied in the interface that prevent modification to the Enhanced Content and associated URLs. Any user within the organization may see the Unpublished A/B test appear in the Syndigo product and may access the draft to continue in the experiment creation workflow.

Modifying the design of Content B – As with standard, non-experiment Enhanced Content in Syndigo today, the content may be modified further from this interface. All required text, specifications, and assets should be provided at this time.

Widget order in the layout of a controlled experiment: As the widgets that Content B shares with Content A must remain identical, they are shown in a read-only state. In an Add a Widget experiment, only the newly added widget can move up or down to any placement in the layout, whereas the general order of the read-only widgets is otherwise preserved.

Canceling the A/B test - It is not possible to fully delete or discard an experiment once it is saved, but the termination status Canceled may be assigned to any operational A/B test. Clicking the cancel button available on this page will result in the experiment status transitioning immediately from Unpublished to Canceled. Canceled status tests are saved permanently and details may be accessed at any time in the future.

Modifying test settings from the configuration modal - An Edit Test Settings option is available on this screen to present the initial configuration modal again, where settings may be changed. Note: The experiment type may not be changed, and removal of sites after variable content has been created will result in loss of progress made toward defining content unique to those sites.

**Please note the following regarding the return to the A/B test creation workflow of an Unpublished experiment:** If either of the following test settings has become invalid, a warning message is presented on this page with guidance to correct the invalid settings in the configuration modal:

- There are no sites remaining that are valid (associated with a linked Enhanced Content Recipient, etc.), or

- The specified end date has passed.

Navigate to the final stage of the workflow, “Review” - Once Content B is complete and the A/B test is saved, the Review button may be clicked to navigate to the next and final step of the A/B test creation experience.

Step 5: Review and publish the A/B test

The final step in the A/B test creation experience is to review the experiment details and publish the Enhanced Content with the experiment now attached. The layout view of Content A is presented side-by-side with Content B, and a Preview button is available by each.

The actions available on the Review interface are as follows:

- Return to the previous step in the workflow to modify Content B.

- Cancel the A/B test.

- View previews of Content A and Content B.

- Publish the A/B test, which results in submission of the Enhanced Content experiment to Syndigo Quality Assurance to review.

Experiment Begins

Once the content has been approved through the Enhanced Content Moderations process, the experiment status transitions to Active, which means the Enhanced Content with the A/B test now attached becomes available on all valid product page URLs.

As with all published Enhanced Content, Syndigo collects data that is translated into valuable metrics available in reports. For A/B tests, this data is further broken down to distinguish between Content A and Content B.

Which visitors are included in the experiment?

It is important to note that only visitors who qualify as new visitors will be included in the A/B Test tracking and results. New visitors are defined as customers who have not viewed Enhanced Content on the product URLs in the past twelve months. Existing visitors will continue to see only Content A, and their interactions and conversions do not affect the experiment results. If an existing visitor clears their browser cache or visits the page on a new device, the sessions they initiate may then be treated as those of a new visitor and included in the experiment.
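The new-visitor rule amounts to a lookback check on the visitor's last recorded Enhanced Content view. The sketch below is an assumption-laden illustration: it approximates "twelve months" as 365 days, and the function and parameter names are hypothetical. A visitor with no recorded view (for example after clearing the browser cache) is treated as new.

```python
from datetime import datetime, timedelta
from typing import Optional

# "Past twelve months", approximated here as 365 days (assumption).
LOOKBACK = timedelta(days=365)


def is_new_visitor(last_ec_view: Optional[datetime], now: datetime) -> bool:
    """True when no Enhanced Content view is known within the lookback window."""
    if last_ec_view is None:
        return True
    return now - last_ec_view > LOOKBACK
```

Only sessions for which this check is true would be assigned to Content A or Content B and counted in the results; everyone else keeps seeing Content A.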

Experiment In Progress

At any time during the course of the experiment, users may click into the A/B Testing Details Page from the A/B Testing Landing Page. This view displays the test configuration details and previews of Content A and Content B, in addition to a Test Progress section which updates once daily to reflect how the experiment is progressing toward the duration criteria specified in the initial test configuration.

The content on the A/B Testing Details Page when the test status is Active is as follows:

- The actions available at this stage are:

- Export – please reference the final section of this article, Export Experiment Data, for details about this action.

- Cancel Test – which will result in the immediate termination of the experiment and transition the test to the permanent state of “Canceled”.

- The Header section reflects the test configuration details exactly as presented in the Build workflow, but the button to edit these settings is not available anymore.

- Test Progress is a section unique to the A/B Testing Details Page when the test status is Active. The content displayed in this section is determined by the experiment termination criteria specified in the test configuration.

- The layout review section is identical to what is included on the Review screen of the creation workflow. Changes may not be made in this view as all elements are in read-only form on this screen.

Preview links are available for each Content. Clicking on a Preview link will open the Content A or Content B preview in a new browser tab.

Experiment Results Ready for Review

Recommended Pre-Reading: Understanding A/B Test Results

When the experiment stops running and results are available to be reviewed, one of the following scenarios will be presented in the Results section now available on the A/B Testing Details Page:

Scenario 1: The experiment reached statistical significance and Content A is declared the winner. Content A is always published across all sites in the collection, regardless of how the test is configured.

Scenario 2: The experiment reached statistical significance and Content B is declared the winner. If the A/B test was configured for automatic publication of the winning content, Content B is published across all sites in the collection. If the A/B test was configured for manual publication of the winning content, Content A is published across all sites in the collection (even though B is the winner).

Scenario 3: The experiment did not reach statistical significance, as less-than-optimal statistical confidence was calculated. This means that there were not enough visitors to the URLs where the experiment was running. The test should be reproduced and configured to run for a longer period of time or until statistical significance is reached in order to collect more data. Content A is published across all sites in the Collection.

Scenario 4: The experiment reaches statistical significance, but it declares that neither Content A nor B is the winner. This scenario indicates that enough data was collected for the algorithm to have a high level of confidence that there is no discernible difference in results between Content A and Content B. The most likely cause of this declaration is that the Enhanced Content does not differ enough between A and B to be perceivable to visitors. Content A is published across all sites in the Collection.
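Read together with the end-of-test setting chosen in Step 3, the four scenarios reduce to one rule for which version is live when the test stops. The sketch below assumes that Content B is published only when the test was configured to automatically apply the winning content and B was declared the winner; the function and parameter names are hypothetical.

```python
from typing import Optional


def published_after_test(winner: Optional[str], auto_apply_winner: bool) -> str:
    """Return "A" or "B", the version live on all sites once the test stops.

    winner is "A", "B", or None (no significance reached, or no winner declared).
    """
    if auto_apply_winner and winner == "B":
        return "B"
    # Every other scenario leaves Content A published.
    return "A"
```

In either mode a user can still switch versions manually while reviewing the results, so this function describes only the state immediately after the experiment stops.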

The Results section also presents an option to switch the content version that is currently displayed on all pages across the collection. Clicking on the action to switch content versions will trigger the Confirm and Complete Test workflow, which is explained further in the next section of this walkthrough.

Experiment Conclusion: Mark as Complete and Other Available Actions

The actions available when results are ready to review are as follows:

- Mark the test as confirmed and complete (required)

- Extend the test (optional)

- Export results - please reference the final section of this article, Export Experiment Data, for details about this action.

Confirm and Complete the A/B Test:

A user at the organization must review the results of each A/B test, choose which content version should be saved, and manually mark the experiment as complete for the experiment to formally conclude. This is achieved through the Confirm and Complete Test action, which is required in order to “unlock” the Enhanced Content and URLs and transition the A/B test to the termination status of Complete.

The process to mark the A/B test complete is as follows:

- Review the results available and make note of the Content version, A or B, which is currently published to all sites.

- Either click on the text to switch content versions located under the primary message of the Results section or click the Confirm and Complete Test button in the top right corner of the page.

- Confirm one action that should be applied to the Enhanced Content at this time:

- Keep the currently published content version published on all sites and discard the other content version,

- Switch to the other content version, publish it to all sites, and discard the currently published content version, or

- Switch to the other content version, save it to the Enhanced Content but do not publish to sites at this time, and discard the currently published content version.

- The final step is to confirm the A/B test results, which brings the experiment to its formal conclusion. This action cannot be undone. Once the test is assigned the status Complete:

- The content version which was not selected in the previous step is discarded permanently,

- The experiment cannot be made operational again,

- The A/B test details will continue to be accessible in the future for reference purposes only, and

- All restrictions on the Enhanced Content and associated URLs are lifted so that users may manage them outside the context of A/B tests – including the ability to create a new experiment for the Enhanced Content.

Extend the A/B Test:

An A/B test may be reactivated to continue running for a longer period of time and collect more data. This action is highly recommended if fewer than 3,000 unique visits have been collected to date and the probability reflected in the outcome statement of the test is less than 80 – 90%. (See the article Understanding A/B Test Results for more information about probability.)

Clicking the Extend Test button on the details page will reveal a modal where a new, future end date may be entered, either typed into the field in the standard date format MM/DD/YYYY or selected from the calendar tool. It is recommended to select an end date far enough into the future to ensure that a total of 3,000 – 5,000 unique shoppers will be qualified into the experiment. An extension of less than one week is not generally recommended.

Proceeding forward in the modal after inputting a valid new end date will result in the immediate transition of the A/B test status from “Needs Review” back to “Active”. An email notification will be sent to all configured email addresses when the new end date nears.
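The extension guidance can be sketched as two small helpers: one that applies the recommendation thresholds and one that parses a typed end date in MM/DD/YYYY form. Both are illustrations only. The names are hypothetical, and the 0.80 default is an assumption taken from the lower end of the 80 – 90% range quoted above.

```python
from datetime import date, datetime

MIN_UNIQUE_VISITS = 3000  # figure quoted in the article


def should_extend(unique_visits: int, probability: float, threshold: float = 0.80) -> bool:
    """Extension is recommended when both visits and probability are low."""
    return unique_visits < MIN_UNIQUE_VISITS and probability < threshold


def parse_new_end_date(text: str, today: date) -> date:
    """Parse MM/DD/YYYY and require a date in the future."""
    end = datetime.strptime(text, "%m/%d/%Y").date()
    if end <= today:
        raise ValueError("the new end date must be in the future")
    return end
```

For instance, a test with 1,200 unique visits and a probability of 0.65 would be flagged for extension, and `parse_new_end_date("12/31/2030", date(2030, 1, 1))` yields the date 31 December 2030.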

Export Experiment Data

Starting with the first day that the A/B Test status is active and shoppers are qualified into the experiment, the underlying raw data is available for export as an .xlsx file. This same export functionality remains available in the interface indefinitely after the test concludes or even if the test was canceled after having collected data.

Clicking the Export button will reveal a modal where one of three options may be selected for the export:

- URL Level

- Widget Level

- Asset Level

These options refer to the unique aggregation levels available for A/B testing data. Please reference the article Understanding A/B Test Results for detailed information about the aggregations and metric definitions.

Only one export may be selected at a time in this modal, and proceeding forward will initiate a direct download of the .xlsx file with the associated raw data.